ReelRating – A Comprehensive Movie Rating Web Application

PROJECT TYPE | HCI and Software Development Project

TIMELINE | January – June 2023

ROLE | QA Tester and Usability Team Member

TEAM | 16 Members: Pezhman Raeisian Parvari; HCI Experts; Computer Scientists; Quality Assurance Specialists

TOOLS | Figma; GitHub; Manual Testing Tools

Overview:

The ReelRating project aimed to create a web application for rating movies, requiring collaboration among 16 individuals with expertise in HCI, computer science, and quality assurance. The team was structured into five groups: “Requirements,” “Usability,” “GUI,” “Engine-DB-Networking,” and “QA.”

PROBLEM STATEMENT

The ReelRating project emerged in response to the growing demand for reliable and user-friendly platforms for movie enthusiasts to share and access movie ratings and reviews. Recognizing the limitations of existing movie rating applications, particularly in terms of user experience and accuracy of ratings, our team sought to develop an innovative solution that would enhance both the reliability of movie ratings and the overall user interaction.

We identified key challenges in the current landscape, including inconsistent rating mechanisms, poor user interface design, and the lack of integration between various components of movie information (e.g., reviews, actor data, and movie details). We aimed to create a cohesive and intuitive web application that addresses these issues by providing users with a seamless and engaging experience to rate, review, and explore movies.

By leveraging modern web development techniques and rigorous quality assurance practices, we aimed to improve the accuracy of movie ratings and offer a dynamic platform for users to connect with the movie community. Our approach involved extensive usability testing and agile planning to ensure that the final product met the diverse needs of our target audience, ultimately delivering a superior movie rating experience.

RESEARCH

USER GROUP | Movie Enthusiasts and General Audience

METHODOLOGY | Surveys, Interviews, Usability Testing

Project Initiation:

- Identified project goals, objectives, and stakeholders.

- Established a multidisciplinary team structure to cover various aspects of the project.

- Defined roles and responsibilities within the QA team.

Requirement Gathering:

- Worked closely with the Requirements team to understand and document functional and non-functional requirements for the application.

- Contributed to refining user stories and acceptance criteria.

Scrum and Agile Planning:

- Adopted the Scrum framework for project management.

- Participated in sprint planning, backlog grooming, and weekly meetings to ensure alignment with project goals.

- Collaborated with cross-functional teams to address issues and blockers.

Front-end and Back-end Testing:

TESTING APPROACH

STRATEGY | Comprehensive Manual and Automated Testing

FOCUS AREAS |

- Microservice Testing:

  - Rating Microservice: Ensured accurate calculation and storage of movie ratings.

  - Review Microservice: Validated the capture, storage, and retrieval of user reviews.

  - Actor Microservice: Verified management of actor-related data, including biographies and filmographies.

  - Movie Microservice: Tested functionalities like fetching movie details, genre classification, and related metadata.

- End-to-End Testing:

  - Developed and executed test cases covering the main user flows as well as edge and error cases.

  - Verified data integrity and consistency across microservices.

  - Conducted load testing to evaluate scalability and performance.

- Usability Testing:

  - Created and executed usability test cases to assess the application’s user-friendliness.

  - Collected feedback from real users to identify areas for improvement.

  - Iteratively refined the user experience based on testing results.

- Integration Testing:

  - Ensured smooth integration of front-end and back-end components.

  - Participated in continuous integration testing to maintain code integrity.

  - Verified the successful deployment of new features and updates.

- Bug Tracking and Reporting:

  - Utilized bug-tracking tools to document and prioritize identified issues.

  - Collaborated with the development team to verify bug fixes.

  - Provided regular updates on the status of testing efforts.

TOOLS | Manual Testing Tools, GitHub for Issue Tracking, Load Testing Tools

OUTCOMES |

- Ensured high reliability and performance of the ReelRating application.

- Enhanced collaboration between testing, development, and design teams.

- Delivered a user-friendly and robust movie rating web application.

Back-end Testing:

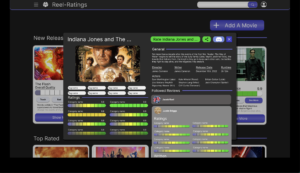

As a member of the QA team, I carried out comprehensive manual testing of the back-end, focusing on the microservices that make up the ReelRating application. The back-end comprised four distinct microservices, each serving a specific purpose:

Rating Microservice:

Conducted rigorous testing of the Rating Microservice to ensure accurate and reliable calculation and storage of movie ratings.

Validated that the microservice correctly processed and updated ratings based on user input.
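To illustrate the kind of test cases this involved, here is a minimal sketch of rating checks (aggregation, updates, boundary values, and the empty case). The Rating Microservice’s real API is not described here, so `RatingStore`, `submit_rating`, and `average` are hypothetical names for an in-memory stand-in, and the 1–5 star scale with one rating per user per movie is an assumption. In practice the same checks would be pointed at the service’s actual endpoints.

```python
from collections import defaultdict


class RatingStore:
    """Hypothetical in-memory stand-in for the Rating Microservice.

    Assumes a 1-5 integer star scale and one rating per (user, movie) pair;
    a resubmitted rating replaces the user's earlier one.
    """

    MIN_STARS = 1
    MAX_STARS = 5

    def __init__(self):
        # movie_id -> {user_id: stars}
        self._ratings = defaultdict(dict)

    def submit_rating(self, movie_id, user_id, stars):
        # Reject non-integers (bool is an int subclass, so exclude it explicitly).
        if isinstance(stars, bool) or not isinstance(stars, int):
            raise TypeError("stars must be an integer")
        # Reject values outside the assumed 1-5 scale.
        if not self.MIN_STARS <= stars <= self.MAX_STARS:
            raise ValueError("stars out of range")
        self._ratings[movie_id][user_id] = stars

    def average(self, movie_id):
        # .get() does not create a key, so unrated movies stay absent.
        scores = self._ratings.get(movie_id)
        if not scores:
            return None  # no ratings yet: avoid dividing by zero
        return round(sum(scores.values()) / len(scores), 2)


def run_rating_checks():
    store = RatingStore()

    # Aggregation: (4 + 5) / 2 = 4.5
    store.submit_rating("m1", "alice", 4)
    store.submit_rating("m1", "bob", 5)
    assert store.average("m1") == 4.5

    # Update: bob re-rates, so the average becomes (4 + 3) / 2 = 3.5
    store.submit_rating("m1", "bob", 3)
    assert store.average("m1") == 3.5

    # Boundaries: out-of-range values are rejected and leave data unchanged
    for bad in (0, 6):
        try:
            store.submit_rating("m1", "carol", bad)
        except ValueError:
            pass
        else:
            raise AssertionError(f"{bad} should have been rejected")
    assert store.average("m1") == 3.5

    # Empty case: a movie nobody has rated has no average
    assert store.average("m2") is None
    return "all rating checks passed"


if __name__ == "__main__":
    print(run_rating_checks())
```

Keeping the update and rejection cases next to the basic average matters because they catch the bugs a simple happy-path check misses: double-counting a re-rated movie, or silently storing an invalid value.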

Review Microservice:

Collaborated with developers to test the Review Microservice, focusing on its ability to capture, store, and retrieve user reviews effectively.

Ensured seamless integration with the front-end components for displaying user reviews.

Actor Microservice:

Executed thorough testing of the Actor Microservice, verifying its capacity to manage actor-related data such as biographies, filmographies, and other relevant information.

Worked closely with the GUI team to ensure actor details were presented correctly in the user interface.

Movie Microservice:

Played a key role in testing the Movie Microservice, emphasizing functionalities like fetching movie details, genre classification, and related metadata.

Worked with front-end counterparts to confirm that the microservice functioned correctly, providing a cohesive user experience.

Back-end endpoints

Part of the QA tests

Testing Approach:

- Developed and executed test cases based on the microservices’ defined functionalities, covering normal use as well as edge and error cases.

- Verified data integrity and consistency across microservices through extensive database testing.

- Collaborated with the Engine-DB-Networking team to validate proper communication and data flow between microservices.

- Participated in load testing to evaluate the scalability and performance of each microservice.
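One concrete form of the cross-service consistency checks described above is a referential-integrity scan: every review, rating, and filmography entry should point at a movie the Movie Microservice actually returns. The sketch below assumes each service’s data has already been fetched into plain Python lists. The function name and record shapes are hypothetical illustrations, not ReelRating’s actual data model.

```python
def find_orphaned_references(movies, reviews, ratings, actors):
    """List references to movie IDs that the Movie Microservice does not return.

    All record shapes are hypothetical: each movie has an "id"; each review and
    rating has its own "id" plus a "movie_id"; each actor has an "id" and a
    "filmography" list of movie IDs.
    """
    known_ids = {movie["id"] for movie in movies}
    problems = []

    # Reviews and ratings each point at exactly one movie.
    for source, records in (("review", reviews), ("rating", ratings)):
        for record in records:
            if record["movie_id"] not in known_ids:
                problems.append((source, record["id"], record["movie_id"]))

    # Actors point at many movies through their filmography.
    for actor in actors:
        for movie_id in actor["filmography"]:
            if movie_id not in known_ids:
                problems.append(("actor", actor["id"], movie_id))

    return problems


if __name__ == "__main__":
    # Hypothetical snapshots, e.g. JSON already fetched from each service.
    movies = [{"id": "m1"}, {"id": "m2"}]
    reviews = [{"id": "r1", "movie_id": "m1"}, {"id": "r2", "movie_id": "m9"}]
    ratings = [{"id": "x1", "movie_id": "m2"}]
    actors = [{"id": "a1", "filmography": ["m1", "m3"]}]
    for problem in find_orphaned_references(movies, reviews, ratings, actors):
        print(problem)
```

With the sample data, the scan reports review `r2` (pointing at the unknown movie `m9`) and actor `a1` (whose filmography lists the unknown `m3`). Each finding names the source service and record, which makes it straightforward to file as a bug report.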

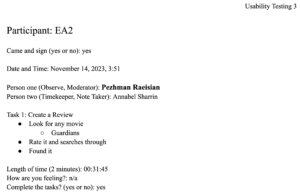

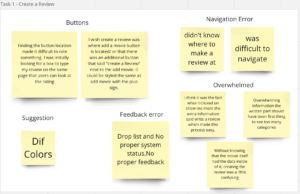

Usability Testing:

- Contributed to the usability team by executing usability test cases.

- Collected feedback from real users to assess the application’s user-friendliness.

- Worked on improving the user experience based on usability testing results.

Part of the usability testing results

Personas

In our design process, we took deliberate steps to ensure the app resonated with its target audience. We crafted two fictional user personas that embody the characteristics of the movie enthusiasts expected to use the app, which let us tailor the design to their preferences. We applied the same care to the user journey, planning the steps users would take to navigate and use the app.

Our design journey commenced with extensive brainstorming and research, culminating in the creation of these user personas:

Continuous Integration and Deployment:

- Collaborated with the Engine-DB-Networking team to ensure smooth integration of front-end and back-end components.

- Participated in continuous integration testing to maintain code integrity.

- Verified successful deployment of new features and updates.

Bug Tracking and Reporting:

- Utilized bug-tracking tools to document and prioritize identified issues.

- Collaborated with the development team to verify bug fixes.

- Provided regular updates on the status of testing efforts.

GitHub Issues

Documentation:

- Contributed to creating comprehensive documentation, including test plans, test cases, and user guides.

- Ensured documentation reflected any changes or updates made during the project lifecycle.

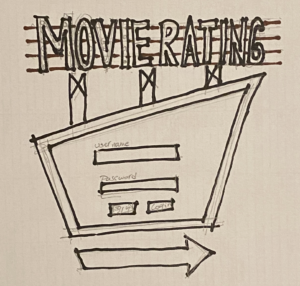

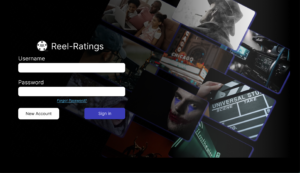

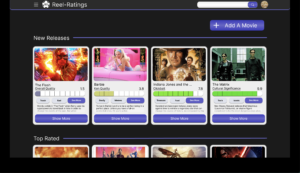

Designing Wireframes and Prototypes:

In addition to my role in quality assurance, I contributed to the initial phases of the ReelRating project by participating in the design process. As a member of the Usability team, I played a key role in creating wireframes and prototypes in collaboration with the GUI team and the rest of the Usability team.

Wireframe Creation:

- Collaborated with the GUI team to understand and translate high-level requirements into detailed wireframes.

- Utilized design tools such as Figma to create wireframes that outlined the basic structure and layout of the ReelRating web application.

Wireframes

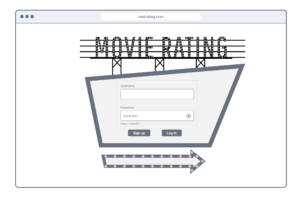

Prototyping:

- Developed interactive prototypes to provide stakeholders with a tangible representation of the user interface and navigation flow.

- Worked closely with the Usability team to incorporate feedback from initial prototype testing and refine designs for optimal user interaction.

- Collaborated with the Engine-DB-Networking team to validate the feasibility of implementing the proposed design elements.

High-fidelity prototype

User Feedback Integration:

- Actively participated in usability testing sessions with real users to gather insights on the effectiveness of the wireframes and prototypes.

- Iteratively refined designs based on user feedback, focusing on enhancing user experience and addressing usability concerns.

- Presented updated wireframes and prototypes to the wider team, incorporating valuable input from cross-functional collaborators.

Alignment with Development:

- Worked closely with developers to ensure that the finalized prototypes aligned with the technical capabilities of the back-end microservices and overall architecture.

- Facilitated clear communication between the design and development teams, ensuring a seamless transition from prototype to implementation.

Key Outcomes:

- Contributed to the creation of well-defined wireframes and prototypes that served as a visual foundation for the ReelRating project.

- Improved collaboration between design, development, and testing teams, fostering a cohesive and efficient workflow.

- Enhanced the overall user experience by incorporating user feedback into the design process.

Project Closure:

- Conducted a retrospective meeting to review the project’s successes, challenges, and areas for improvement.

- Contributed to the creation of a final project report for stakeholders.

THINGS I LEARNED

Collaboration:

- Improved teamwork and communication within a multidisciplinary team.

- Coordinated effectively with different teams to ensure seamless integration.

Agile Methodologies:

- Gained experience with Scrum and Agile planning.

- Adapted quickly to changes and addressed blockers efficiently.

Quality Assurance:

- Developed skills in manual and automated testing.

- Created and executed comprehensive test cases covering both functional behavior and performance.

User Experience Design:

- Contributed to wireframe and prototype creation using Figma.

- Incorporated user feedback to refine designs and enhance the user experience.

Technical Skills:

- Deepened my knowledge of testing back-end microservices and ensuring data integrity.

- Used GitHub for issue tracking and documentation.

Research:

- Conducted research on user preferences and developed user personas.

- Created user journeys to ensure the app met target audience needs.

Documentation:

- Created comprehensive documentation, including test plans and user guides.

- Documented and prioritized issues using bug-tracking tools.

The ReelRating project enhanced my skills in quality assurance, user experience design, and agile project management, emphasizing user-centric design and effective team collaboration.